Content-disposition: Archival – Repatriating Dates on Access to Born-digital Records Online

Abstract: Downloading an object over the internet through a standard web-browser is a mechanism that is ‘less-than-optimal’ for the delivery of archival objects. Download of objects will not preserve the file-system metadata of the object. Tools like Wget can do this, but do we want the same behavior of the browser? On answering that, do we also need to create mandatory new requirements in future digital preservation systems? The repatriation of modified dates with born-digital records, for example? Or the maintenance of that information with the object across its lifetime; from deposit through to delivery? This blog entry attempts to ask this trail of questions, each of which we would need to solve one-by-one, before asking the reader – what behavior do you see, or have you implemented in your digital preservation system? – What behaviors do you think are important?

Red Shift…

It’s a small thing, something at the infra-red end of the spectrum of digital preservation or digital archiving – deep in the bowels of the minutiae that, perhaps, in-general, we’re not trying to solve each day. Find a file on the internet – maybe even via your own catalog – download it and then look at the file-properties in your operating system – what date does it say? – When did it first get put into the repository? The last-modified date of any digital object, while not the definitive measurement of whether a file has changed or not, is often the first, and only clue we need in a business environment to understand if a file has been modified or not (an environment where we’re not considering digital preservation or the potential for digital forgeries, daily).

What happens when we deposit a file into a digital preservation system?

A number of things will happen to an object along a standard digital preservation ingest workflow. The original modified date will be recorded in system metadata. This is how your end user will casually assure themselves that the file is what they expect it to be – that it hasn’t been modified since the depositor last did. A checksum will also be generated and recorded that will demonstrate definitively that the object they are looking at is the same as was transferred into the organization’s custody.

In some digital preservation systems a filename will be given to the object on ingest. What we see on delivery is often the original filename repatriated with that object on request. In other systems a catalog reference may be used for the object on delivery and so the original title is not re-associated.

It will depend on the requirements of your system and how they’ve been encoded in technology.

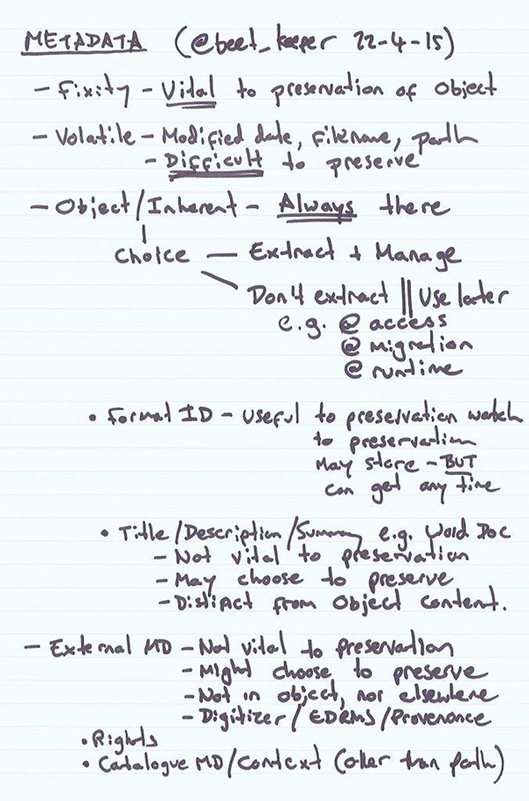

What’s the difficulty with some metadata?

The modification date of a file is volatile metadata – volatile in the sense that it is file-system metadata and it changes with every change to a file, i.e. a file is edited and the checksum changes. It is also volatile in the sense that unless you are looking specifically to preserve this information it can easily be lost. Tools like rsync exist for this; its -t, --times flag transfers file-system dates along with the object during a data transfer to a remote system.

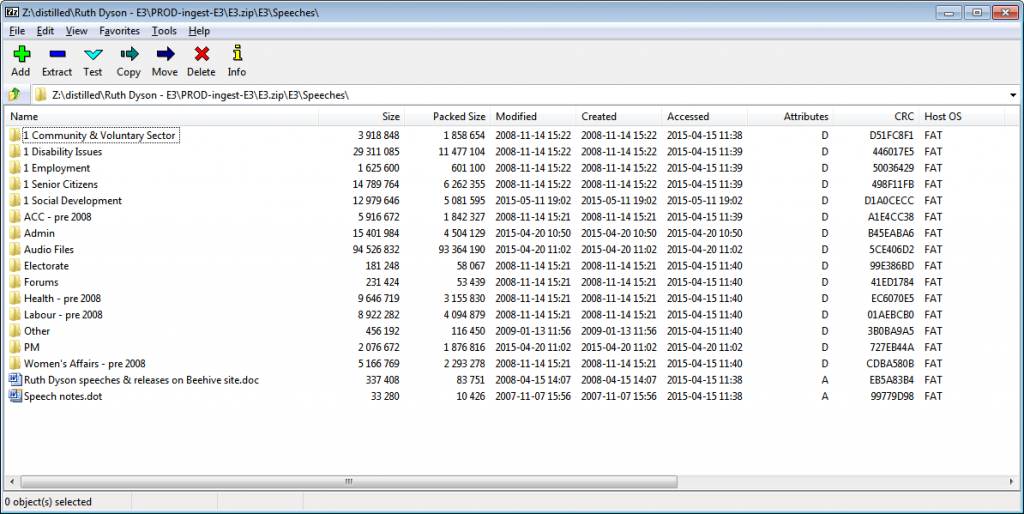

To preserve this data within our long-term preservation system, Rosetta, we have to extract it using DROID from The National Archives, UK and supply it in the submission information package (SIP). Though ZIP files (the mechanism we use to package the objects in the SIP) can maintain this information too – and so it does arrive in the system at one point:

Documented in PKZIP, https://gist.github.com/ross-spencer/386965c55359d86e7c59#file-pkzip-appnote-6-3-4-L1167 and https://gist.github.com/ross-spencer/386965c55359d86e7c59#file-pkzip-appnote-6-3-4-L1219

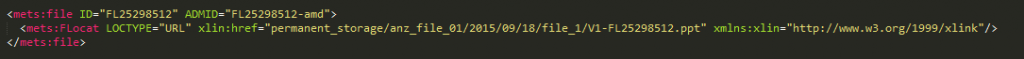

In our system, once deposited, a file takes on new metadata, including a new filename. The information is stored in the system’s metadata object for the file, (METS with Rosetta ‘DNX’ technical extensions):

In this case, the filename in the system is the same as its persistent identifier in the system FL25298512, the name appended with the extension .ppt.

Its new modified date can be seen in the object characteristics – this date aligns with the fact that this file was deposited 18 September 2015.

The object’s original file-system metadata is mapped into the General File Characteristics section of the metadata object:

This file was deposited as ‘Digital Future Summit-video.ppt’ and was last modified 23 November 2007. After deposit, the file becomes intrinsically linked to the preservation system’s metadata (Note: The file may have been deposited with other metadata, e.g. from an EDRMS) – the volatile file-system metadata of this object is now externalized.

What happens to the object now?

We’ll jump to the end of the process – further management and preservation happens but is out of scope for this blog n.b. <!–preservation happens here–> – the two parts of the process that I want to focus on for this entry are deposit and delivery.

The record I’m using in my examples has the catalog ID: R24991813.

When we access the record, the server (the Rosetta delivery mechanism, linked to from our catalog) returns the headers below. To inspect them, I’m using Live HTTP headers for Chrome, which is also available for Opera; Wireshark is a good, more powerful alternative.

| Request | |
| --- | --- |
| GET /delivery/StreamGate?is_rtl=false&is_mobile=false&dps_dvs=1446352930373~46&dps_pid=FL25298512 HTTP/1.1 | |
| Host: | ndhadeliver.natlib.govt.nz |
| Accept: | text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,*/*;q=0.8 |
| Accept-Encoding: | gzip, deflate, sdch |
| Accept-Language: | en-GB,en-US;q=0.8,en;q=0.6 |
| Cookie: | JSESSIONID=BBF5AE7B3C021FB9273D6165B6713CDB.henga.natlib.govt.nz:1801; NDHASLB03=henga; visid_incap_227072=v22czd0QS3mSpWWDi78hfNyXNVYAAAAAQUIPAAAAAAAi3w7IsOBPtwIZQuaPprjO; incap_ses_248_227072=xwH6N1y0Gk/MNf/nYhNxA92XNVYAAAAAc57x4anP9khOsY2h2vk1Tg== |
| Referer: | http://ndhadeliver.natlib.govt.nz/view/action/ieViewer.do?is_rtl=false&is_mobile=false&dps_dvs=1446352930373~46&dps_pid=IE25298510 |
| Upgrade-Insecure-Requests: | 1 |
| User-Agent: | Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Ubuntu Chromium/45.0.2454.101 Chrome/45.0.2454.101 Safari/537.36 |

| Response | |
| --- | --- |
| HTTP/1.1 200 OK | |
| Accept-Ranges: | bytes |
| Age: | 0 |
| Cache-control: | must-revalidate |
| Connection: | Keep-Alive |
| Content-Description: | File Transfert |
| Content-Disposition: | inline;filename=”Digital Future Summit-video.ppt” |
| Content-Length: | 21549568 |
| Content-Transfer-Encoding: | binary |
| Content-Type: | application/vnd.ms-powerpoint;charset=UTF-8 |
| Date: | Sun, 01 Nov 2015 04:42:13 GMT |
| Expires: | 0 |
| Server: | |
| X-CDN: | Incapsula |
| X-Iinfo: | 1-906050-905876 SNNY RT(1446352930735 2262) q(0 0 0 -1) r(2 2) U5 |

The request and response are pasted as-is, including typographical errors present at the time of writing.

The response is of most interest; first, it attempts to display the content inline:

Content-Disposition: inline

It is unlikely that the majority of browsers will be able to display this content, and so in most cases, a download dialog will appear.

filename="Digital Future Summit-video.ppt"

The second parameter of the Content-disposition string is responsible for repatriating the object with the filename recorded in the digital preservation metadata. In this case replacing the preservation system adopted filename, ‘FL25298512.ppt’ with ‘Digital Future Summit-video.ppt’.
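For readers who want to pick the header apart programmatically, a small Go sketch follows. It uses the standard library's `mime.ParseMediaType`, which accepts Content-Disposition values as well as media types; the wrapper name `filenameFromDisposition` is an assumption for this example.

```go
package main

import (
	"fmt"
	"mime"
)

// filenameFromDisposition splits a Content-Disposition value into its
// disposition type and its filename parameter (empty if none is present).
func filenameFromDisposition(header string) (string, string, error) {
	disposition, params, err := mime.ParseMediaType(header)
	if err != nil {
		return "", "", err
	}
	return disposition, params["filename"], nil
}

func main() {
	// The value returned by the delivery server, with plain ASCII quotes.
	value := `inline;filename="Digital Future Summit-video.ppt"`
	disposition, name, err := filenameFromDisposition(value)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Printf("type=%s filename=%q\n", disposition, name)
}
```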

The user will receive an object whose created date and modified date are both set to the day of download.

This is not the object we put into the system; at the least, something has happened to it, however slight. Perhaps a more appropriate phrase is that this is not the same wrapper that belonged to the original file on deposit.

Is this the solution we want?

Without focusing on any preservation system in particular, I have been trying to understand the life-cycle of a digital object from ingest through to delivery, and the requirements that follow from it.

- I want to understand if it’s the preservation system’s responsibility to automatically record the data attached to an object given to it, and where it needs to record it, i.e. is it data that we provide in the SIP ourselves or should it be extracted by default (automatically)?

- Does the system have a responsibility to record provenance for itself, i.e. record the state of the object as it was received so it can be compared to the SIP and the processes enacted on the archival object?

- To expand more – for provenance, if a filename changes in the system, have the system acknowledge in metadata that it has changed the filename; if a datetime change occurs (as in this case), have the system acknowledge in metadata that it has changed the datetime, and the reasons why.

- I want to understand if it’s the preservation system’s responsibility to give the object back to the user as it was deposited.

We control as much of the environment surrounding a physical archive as possible – preventative conservation – why might we not do this for a digital archive? – Perhaps the specific use-case I’m questioning here is borne out of a lack of control over our web browser?

Browser Limitations

There is a thread in the Firefox Bugzilla database that was discussed for ten years, from 2002 to 2012: https://bugzilla.mozilla.org/show_bug.cgi?id=178506 – its most recent status is RESOLVED WONTFIX.

The behavior of Firefox is consistent with other browsers, as we saw above: when you download a file over the internet, it arrives on your PC with today’s date, both for creation and for modification.

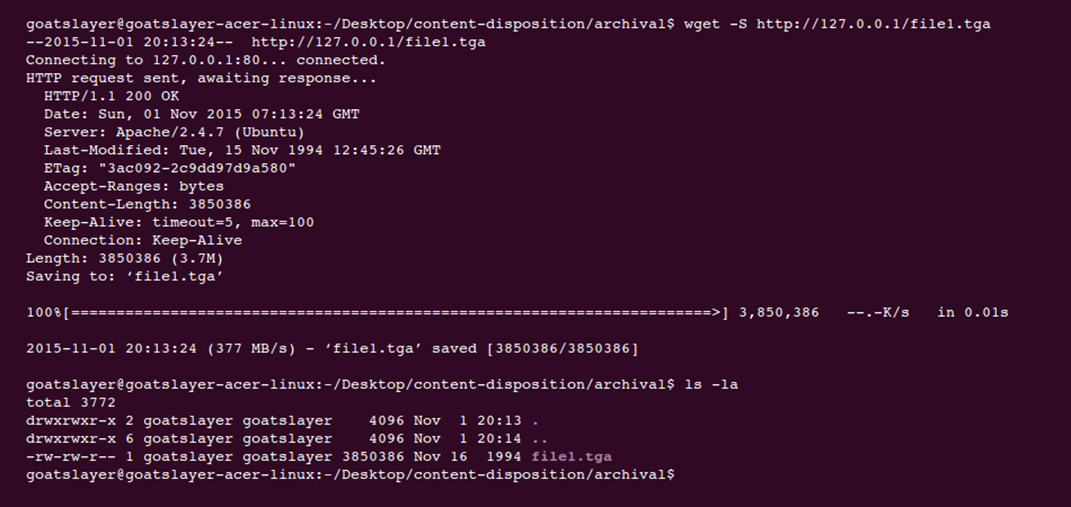

The Linux utility Wget, referenced by different users in the Firefox ticket, by default downloads objects with the date that belongs to the object on the server. From its documentation:

--no-use-server-timestamps

Don't set the local file's timestamp by the one on the server.

By default, when a file is downloaded, its timestamps are set to match those from the remote file. This allows the use of --timestamping on subsequent invocations of wget. However, it is sometimes useful to base the local file's timestamp on when it was actually downloaded; for that purpose, the --no-use-server-timestamps option has been provided.

The tool’s documentation suggests that it has a step to repatriate the modified date with the object after download.

Change through experimentation?

I wanted to be sure that this was a browser limitation: to try other browsers, and to see whether there was a configuration on the web-server that I had been missing that could influence the behavior. I also wanted to generate the evidence required to demonstrate to the vendor the possible configurations that could be provided to users to have greater control over delivery, including potential delivery of the modification date.

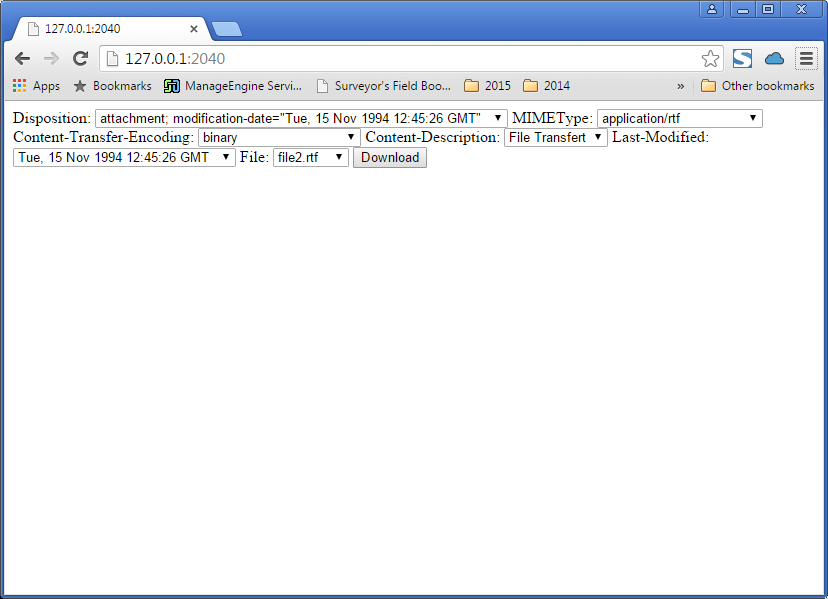

I started to experiment, writing a web-server in Golang that mirrored the delivery configuration of Rosetta, reverse-engineered from what was visible in Rosetta server’s headers. The server code is here: https://github.com/ross-spencer/http-content-delivery-demo

The code can be executed by building in Golang, and then running the resultant executable. The server will listen for requests on port 2040 and can be accessed through 127.0.0.1 or localhost. Please use the former if you’d like to observe the delivery headers for yourself.

The server provides two options specifically relating to content delivery. I have drawn from RFC 2616, Hypertext Transfer Protocol — HTTP/1.1, and RFC 2183, Communicating Presentation Information in Internet Messages: The Content-Disposition Header Field.

Relevant parts that I am interested in are:

RFC 2616, 14.29 Last-Modified

The Last-Modified entity-header field indicates the date and time at which the origin server believes the variant was last modified.

Last-Modified = "Last-Modified" ":" HTTP-date

An example of its use is

Last-Modified: Tue, 15 Nov 1994 12:45:26 GMT

The exact meaning of this header field depends on the implementation of the origin server and the nature of the original resource. For files, it may be just the file system last-modified time. For entities with dynamically included parts, it may be the most recent of the set of last-modify times for its component parts. For database gateways, it may be the last-update time stamp of the record. For virtual objects, it may be the last time the internal state changed.

An origin server MUST NOT send a Last-Modified date which is later than the server's time of message origination. In such cases, where the resource's last modification would indicate some time in the future, the server MUST replace that date with the message origination date.

An origin server SHOULD obtain the Last-Modified value of the entity as close as possible to the time that it generates the Date value of its response. This allows a recipient to make an accurate assessment of the entity's modification time, especially if the entity changes near the time that the response is generated.

HTTP/1.1 servers SHOULD send Last-Modified whenever feasible. ---

And from RFC 2183, the description of a modification-date header field that we have available to potentially affect presentation:

--- RFC 2183, 2. The Content-Disposition Header Field

Content-Disposition is an optional header field. In its absence, the MUA may use whatever presentation method it deems suitable.

It is desirable to keep the set of possible disposition types small and well defined, to avoid needless complexity. Even so, evolving usage will likely require the definition of additional disposition types or parameters, so the set of disposition values is extensible; see below.

In the extended BNF notation of [RFC 822], the Content-Disposition header field is defined as follows:

disposition := "Content-Disposition" ":" disposition-type *(";" disposition-parm)

disposition-type := "inline" / "attachment" / extension-token ; values are not case-sensitive

disposition-parm := filename-parm / creation-date-parm / modification-date-parm / read-date-parm / size-parm / parameter

filename-parm := "filename" "=" value

creation-date-parm := "creation-date" "=" quoted-date-time

modification-date-parm := "modification-date" "=" quoted-date-time

read-date-parm := "read-date" "=" quoted-date-time

size-parm := "size" "=" 1*DIGIT

quoted-date-time := quoted-string ; contents MUST be an RFC 822 `date-time' ; numeric timezones (+HHMM or -HHMM) MUST be used ---

An example request to my server looks like the following:

| POST /filedownload HTTP/1.1 | |
| --- | --- |
| Host: | 127.0.0.1:2040 |
| Accept: | text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,*/*;q=0.8 |
| Accept-Encoding: | gzip, deflate |
| Accept-Language: | en-US,en;q=0.8 |
| Content-Type: | application/x-www-form-urlencoded |
| Origin: | http://127.0.0.1:2040 |
| Referer: | http://127.0.0.1:2040/ |
| Upgrade-Insecure-Requests: | 1 |
| User-Agent: | Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/46.0.2490.80 Safari/537.36 |

We can see above that the Last-Modified header field is not set in the response from Rosetta. If you compare that response with one sample from my experimental server, you can see how the headers change when configured more purposefully:

| Digital Preservation System | | Header Experimentation | |
| --- | --- | --- | --- |
| HTTP/1.1 200 OK | | HTTP/1.1 200 OK | |
| Accept-Ranges: | bytes | Accept-Ranges: | bytes |
| Age: | 0 | Content-Description: | File Transfert |
| Cache-control: | must-revalidate | Content-Disposition: | attachment; modification-date=”Tue, 15 Nov 1994 12:45:26 GMT”; filename=file2.rtf |
| Connection: | Keep-Alive | Content-Length: | 2835 |
| Content-Description: | File Transfert | Content-Transfer-Encoding: | binary |
| Content-Disposition: | inline;filename=”Digital Future Summit-video.ppt” | Content-Type: | application/rtf |
| Content-Length: | 21549568 | Date: | Sun, 01 Nov 2015 22:29:37 GMT |
| Content-Transfer-Encoding: | binary | Last-Modified: | Tue, 15 Nov 1994 12:45:26 GMT |
| Content-Type: | application/vnd.ms-powerpoint;charset=UTF-8 | | |
| Date: | Sun, 01 Nov 2015 04:42:13 GMT | | |
| Expires: | 0 | | |
| Server: | | | |
| X-CDN: | Incapsula | | |
| X-Iinfo: | 1-906050-905876 SNNY RT(1446352930735 2262) q(0 0 0 -1) r(2 2) U5 | | |

At the first attempt, the Last-modified header could not be set manually using a Set() command in my code. The Golang HTTP server code is too intelligent and ensures that this is set, and more importantly ensures that it is set accurately. As such, I modify the date on the server directly n.b. volatile! The mechanism is similar to using the touch command in Linux, encoded in a native Golang function.

I originally started to test each header field in isolation from the other, and if you run the server code for yourself you can still manipulate the response as you’d like. The response above encodes both a last-modified header field and an extension to the content disposition header field:

Content-Disposition: attachment; modification-date="Tue, 15 Nov 1994 12:45:26 GMT"; filename=file2.rtf

Last-Modified: Tue, 15 Nov 1994 12:45:26 GMT

Ultimately, whatever date I chose on the server, whatever header I set – I could not influence the behavior of the browser (Firefox or Chrome, Windows or Linux) – a negative result. But it allows me to draw a line in the sand at the delivery end of the spectrum.

Where does that leave us?

I wanted to share a negative result. I wanted to share my thoughts, and to open up a set of questions, to understand who else is asking them, to orientate my thoughts to understand if it is something to keep in mind in future digital preservation requirements gathering sessions.

Some questions I haven’t been able to answer:

- What does the concept of a last-modified date, associated with the object, mean in an archival context? Is it still important when externalized in other metadata?

- Further, what does the concept mean (a single date attached to a single object) in the context of larger collections of objects? E.g. ingested from an EDRMS?

- Perhaps this bears more relevance in systems that put the burden of rendering onto the user?

- If we could introduce support for this in standard web-browsers, how, when, and where do we manage the modified date inside the preservation system? – Managed movement from deposit through to delivery (micro rsync like operations?) or do we repatriate the object with the date on delivery?

Conclusion

We have the ability to control, or at the very least, understand, and also document, each part of the digital preservation lifecycle if we choose. At the beginning of the development process it is easy to get wrapped up in core feature development – the absolute must haves like continuous checksum monitoring; and later on it is possible to get wrapped up in more theoretical aspects of technology watch and preservation planning while also fighting to get the first transfers into the system.

But a preservation system supports other functions, including, usually, an archival function, and a large number of end-users, who will have different use cases. It is an archive in the purest sense and enables delivery of content to those users. For one use case, that of a government archive, demonstration of provenance is of utmost importance.

Though, this is a very specific use case.

We do have a record in externalized metadata that describes the provenance of a record. In concert the two will likely be enough for most users. The repercussions of not preserving date on delivery seem to be minimal as it doesn’t look to be happening in practice in the systems out there, including one of the primary options for digital preservation.

If we decide that we need this functionality in future – how do we approach it? First, it needs to be included more often in user requirements for digital preservation systems. As well as that, the browsers that form a large part of our delivery infrastructure will also need to be looked at – does anyone want to help me write an RFC to see another content-disposition option added into the standards for archival content?

Content-disposition: Archival

Let me know your thoughts in the comments below.